The inaugural ‘Max Weaver Lecture’ was organised in recognition of the contributions Max Weaver has made to legal education at London South Bank University. The evening featured a discussion with Max, alongside an illuminating lecture from Dr Joshua Jowitt, Senior Lecturer at Newcastle University.

Max Weaver on teaching law at London South Bank University

Max Weaver’s Q & A covered his impressive career in education and the motivations guiding his creation of LSBU’s Law degree. Max was appointed Head of Law at LSBU aged 26. While initially overwhelmed by the sudden promotion, he was driven to create a course which reflected the “living, breathing, moving and controversial” nature of the law. Max’s degree favours practical learning over dissecting law textbooks; for instance, in the Land Law module students are sent to Lambeth Housing Council to witness housing law in action. A particularly popular feature of the degree is the Legal Advice Clinic, through which students can volunteer advice to the public and use their legal knowledge to create meaningful change. Max reflected that not all his ideas were successful, noting one unpopular decision to teach Contract Law backwards, starting with remedies, which he quickly reversed the following year. He closed his speech with the hope that students would recognise that learning the law is an education in its fullest sense, and not just an exercise in achieving good grades.

Does My Bot Have Rights? – Dr Joshua Jowitt

Dr Joshua Jowitt’s lecture fully embodied the spirit of Max Weaver’s introduction, delivering a thought-provoking presentation on the extent to which artificial bodies may be entitled to rights.

Part 1 – Theoretical Background

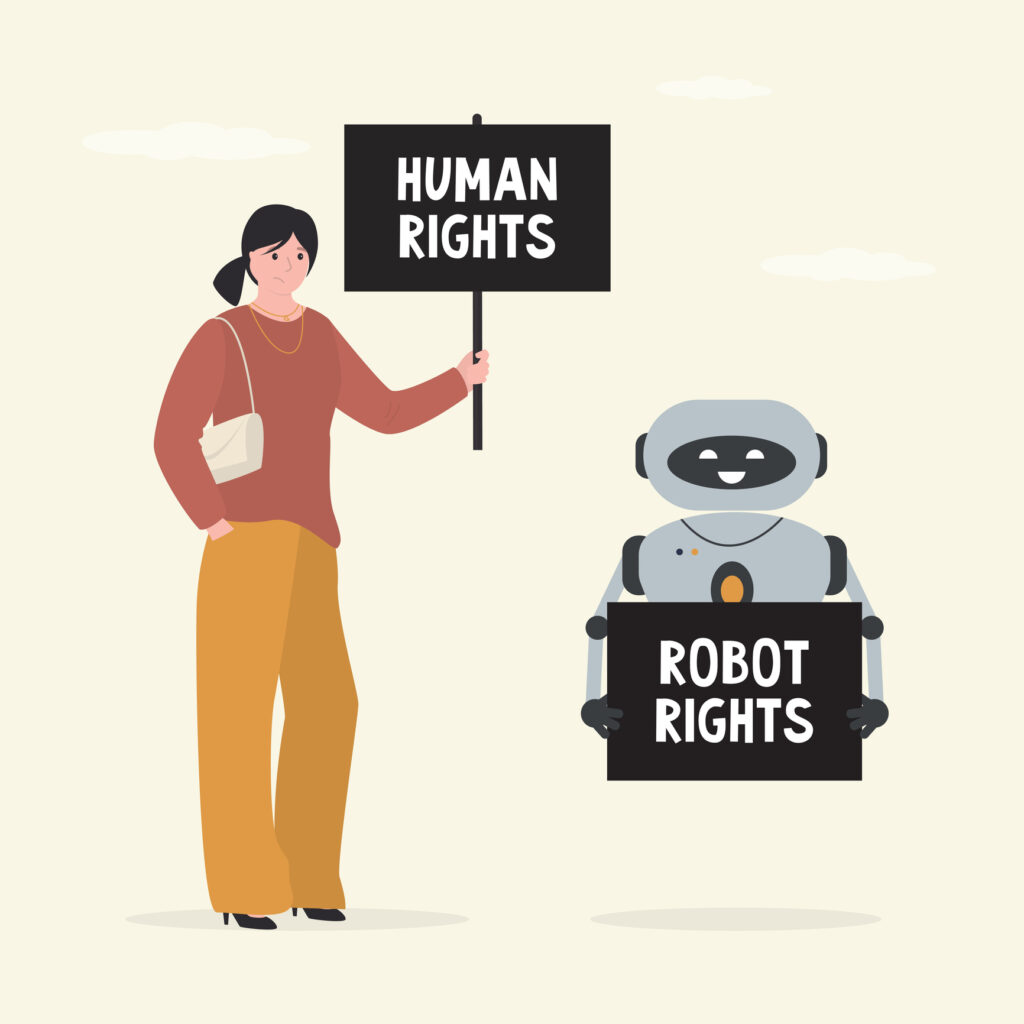

Dr Jowitt’s lecture began with a photo of police brutality from his home county of South Yorkshire. The picture, taken in the context of widespread police violence during the 1984-5 UK miners’ strike, depicts a policeman swinging a baton at an unarmed woman. Jowitt explained that viewing the image, aged 16, inspired his first engagement with the principles of law, leading him to pursue a legal education. Noting ‘each of us are taught the law from our own starting points’, he explained how the photo was a medium through which he unknowingly encountered the concept of ‘Natural law’. Natural law resists the notion that the law is a social fact, and promotes an alternative theory – that higher moral principles guide legal decisions. In the picture, the arbitrary, but legal, exercise of force by a policeman over an unarmed woman was at odds with Jowitt’s understanding of the moral principles which governed the law: it illustrated the gap between natural law and the law in practice.

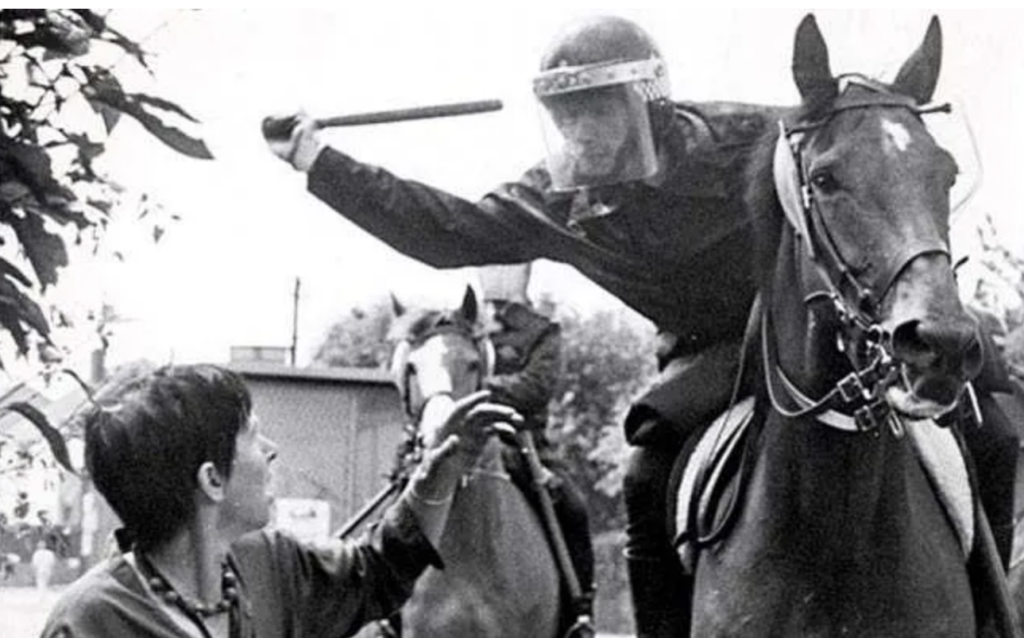

Dr Jowitt went on to address the tension between the law as a social fact and the law as a moral concept by elaborating on the principles of the Radbruch formula. Gustav Radbruch argued that the law represents a balance between legal certainty, purposiveness and justice. Returning to the image, Jowitt argued it captured a moment where legal certainty (the permission for police to forcefully resist protestors) and purposiveness (preventing disruptive protests) were at odds with justice. For Radbruch, where statutory law protects intolerable injustice, it forfeits its own validity.

Part 2: Organoids

Having established that the law should be measured against moral principles rather than a closed system of facts, Jowitt turned to contemporary developments in biotechnology and artificial intelligence. He drew attention to the increasing use of human stem cells to create living organoids. Organoids are in vitro tissues grown from stem cells, or organ cells, which function similarly to an internal organ. Recently, the Nuffield Council on Bioethics permitted lab-grown brain tissue to be used in medical and computing science. It sounds like something taken from a dystopian movie, but human brain cells can be used as processing pieces in computers, and for drug-testing and surgical purposes. Jowitt returned to the legal ethics governing this new technology: if a brain organoid is sentient, surely it deserves legal rights? Assessing sentience is a quagmire in itself but, as a very simplified explanation, this may be done by measuring responsiveness to external stimuli. While this has not yet been assessed, Jowitt believed that artificial organs would certainly react.

Returning to legal rights – what exactly are they? Through the framework of legal philosopher Wesley Hohfeld, Jowitt considered legal rights to emerge from a regulated legal duty. Whether organoids could hold legal rights opens further questions:

- How far should these rights extend? – Should they be restricted to sentient entities?

- Could there be differing degrees of rights? For example, should an organoid made from brain-cells be held to higher standards than one made from arm tissue?

- Topically, can Artificial Intelligence be entitled to legal rights?

Part 3: Legal personhood

Jowitt explained that a ‘legal personality’ is required to bear rights and duties, while property is the object of those rights. To answer whether legal personality could extend to AI, Jowitt noted that traditional legal frameworks create a conceptual limitation at this point: legal personalities are normatively inert – something is defined as a legal person simply because the law categorises it as such. However, a Hohfeldian perspective can again be applied – rather than focusing on whether AI can hold rights, it may be more appropriate to ask if it can bear duties.

To illustrate this point, Jowitt turned to a new case study – ‘DABUS’, an AI algorithm designed to simulate human creativity and create patentable inventions. After DABUS had invented a faster microwave design, its inventor named the bot as the creator. This decision was subsequently taken to court in the UK and Australia, since the bot is not a legal person and is hence incapable of owning the property of a patent. While the UK courts refused to give AI the status of an inventor (Thaler v Comptroller-General of Patents, Designs and Trade Marks [2023] UKSC 49), in Australia a federal court temporarily granted permission for it to own the innovation’s patent (Thaler v Commissioner of Patents [2021] FCA 879), though this was overturned on appeal (Commissioner of Patents v Thaler [2022] FCAFC 62). Jowitt emphasised the theoretical ramifications of the Australian case: by legally owning the patent for a year, the bot temporarily occupied a role traditionally reserved for legal persons.

Once again, this exposed further conceptual difficulty. Jowitt noted that ‘Property is never an absolute ownership status’, so the patent case does not necessarily impose legal personhood upon a bot. He went further – even if AI had a property right, there is no legal reason why a human could not own the AI algorithm itself. In the chain of ownership, a human can still be at the end. Yet the Australian example indicates that at least one judge believed it possible for AI to assume legal personhood: a transformation in traditional understandings of a ‘legal person’, if only for a year.

A question emerged from the audience: considering the recent news story of ChatGPT encouraging suicidal behaviour, if AI and other human-made technologies are granted legal rights, does this mean they can commit crimes too?

This question had Dr Jowitt stumped for a moment, but he answered by referencing the notion that rights and duties may be separated. For example, children have rights, but not duties – a framework which could also be applied to AI models. Additionally, he mentioned that an algorithm is a network; there is no single creator and, by extension, no single locus of responsibility. This is a problem the law needs to contend with, since responsibility should not be extinguished because it is difficult to locate: self-driving cars exemplify a need for culpability to be traced through intersecting networks of liability. Jowitt emphasised that he was challenged by this question and could only speculate rather than give a conclusive answer.

Dr Jowitt’s lecture walked through complex legal theory while making it comprehensible to an audience made up mostly of non-experts in legal theory. He demonstrated that legal theory proves a useful reference when ethical dilemmas challenge the conceptual foundations of our legal system. The possibility for non-human entities to have a tangible influence, for good (e.g. DABUS) or bad (committing crimes), suggests legal culpability must be traced, potentially reshaping the traditional definition of what a ‘legal person’ may be.

Many thanks to Clemency Fisher for heading over to South Bank for a lecture on this important and challenging topic.

Clemency is a GDL student who studied Archaeology before converting to law. She loves modern art, archaeology, and politics, and is interested in pursuing Criminal or Public Law after the GDL. Outside her studies, she enjoys running, visiting art galleries, and watching The Traitors. Clemency is a member of the 2025-26 Lawbore Journalist Team.